Coinbase, A Year On: The $400M Security Gap

A year after the $400 million Coinbase breach, the industry is still nursing a rather expensive collective migraine. But the headache isn't coming from a technical failure – it’s coming from the realisation that modern attacks are rarely about breaking systems. Instead, they are about using them exactly as they were designed.

It wasn't a failure of encryption; it was a failure of assumption. Traditional security models are built to stop unauthorised entry, yet they remain remarkably polite and silent when access appears legitimate. In the world of high-stakes finance, that gap is where the most devastating real-world losses occur.

At Mesoform, our answer to this is simple but critical: Don’t just secure the access; secure the outcome.

What actually happened

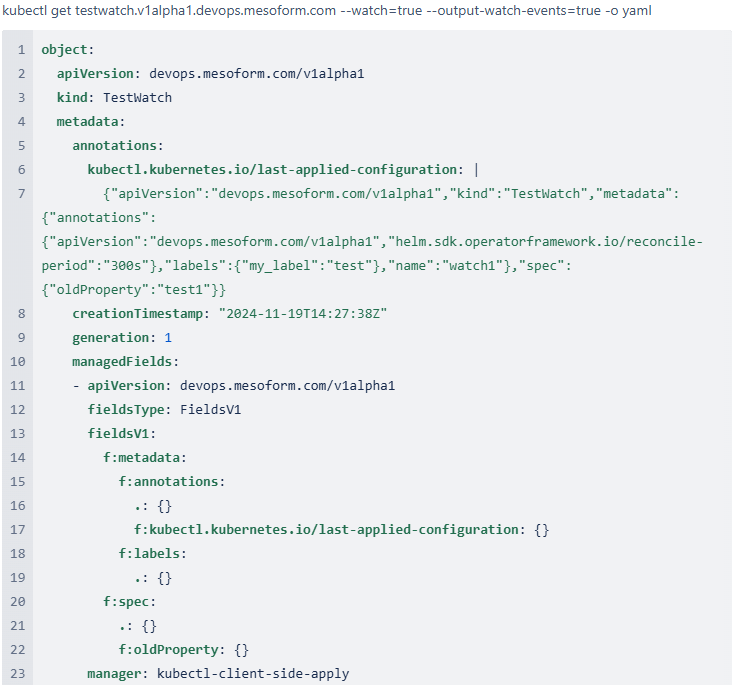

Attackers obtained personal data on over 69,000 customers at Coinbase. No wallets, private keys, or core trading systems were directly compromised. Instead, the breach exposed a softer operational layer: customer support.

Access came through overseas agents working for a third-party provider, TaskUs. These individuals had legitimate access to internal tools and customer records. Through bribery and social engineering, attackers persuaded them to extract and pass on sensitive data.

That information was then used externally, not to break systems, but to impersonate them. Armed with accurate personal and account context, attackers contacted users directly and guided them through actions that resulted in fund transfers.

The key shift is simple: nothing was technically breached, but enough context was exposed to make deception highly effective.

Who was behind it

The breach didn't involve a sophisticated exploit of core trading systems or private keys. Instead, it targeted the "softer" operational layer: customer support.

- The Entry Point: Access came through overseas support agents working for a third-party provider, TaskUs.

- The Method: Through a mixture of bribery and social engineering, these individuals were persuaded to extract and pass on sensitive data.

- The Pivot: This information was used externally, not to break systems, but to impersonate them. Armed with accurate account context, attackers guided users through actions that resulted in fund transfers.

Technically, nothing was breached – but enough context was exposed to make deception highly effective. It’s the digital equivalent of someone stealing your house keys, not by picking the lock, but by convincing the concierge they're your long-lost cousin from Darlington.

The "Gig Economy" of Cybercrime

We aren't dealing with a single, structured group anymore. This is the "gig economy" of the underworld. Coordination happens on platforms such as Telegram and Discord, where specialists contribute specific expertise – whether it’s high-fidelity impersonation or insider targeting.

The barrier to entry is no longer technical depth; it is coordination and the exploitation of human weakness. Capability is distributed across a chain of contributors, making the traditional "perimeter" model look a bit like a picket fence in a hurricane.

Where it broke

The entry point was not core infrastructure, but a third-party support environment. This matters because support functions are not peripheral; they are embedded in operational workflows and carry legitimate access by design.

Attackers exploited this reality. Once inside this layer, they operated within expected permissions. Nothing appeared anomalous at a system level because, technically, nothing was abnormal. The compromise existed in the operational layer, an area most platforms rarely treat as part of the active attack surface.

When it unfolded

The compromise began in late 2024 and unfolded gradually. Data was extracted and refined into usable profiles over months. By the time the breach was disclosed in May 2025, the exploitation phase was already well underway.

This separation between initial access and visible impact is critical. Modern breaches are not always "smash-and-grab" intrusions; they are extended campaigns where value is slowly accumulated before detection.

Why it worked

The breach succeeded because trust was never technically broken; it was assumed.

Once attackers had enough contextual data, every interaction appeared legitimate. Authentication passed, support flows matched expectations, and nothing triggered traditional controls.

From a system perspective:

- Identity was valid

- Processes were followed

- Interactions were expected

But the intent behind those actions had been fully manipulated outside the platform boundary.

The system did not fail in execution. It failed in interpretation. Even Brian Armstrong confirmed that core wallets and infrastructure held firm. And yet, funds still moved.

And that is where modern loss actually occurs: not when systems are broken, but when they correctly process the wrong intent.

This exposes a gap many continue to underestimate: if a user is sufficiently convinced or pressured, they can be guided into authorising transactions that the system sees as valid. Most platforms ask a binary question: Is this user authenticated? Very few ask the harder one: Should this action be allowed, even if the user appears legitimate?

One year on: Where is Coinbase now?

A year after the May 2025 disclosure, Coinbase is navigating a complex recovery. While they have committed to reimbursing victims and refused to pay the $20 million ransom, the structural fallout continues:

- The Support Hub Pivot: In response to the TaskUs incident, Coinbase has begun opening new support hubs in the US, attempting to bring sensitive data access back "onshore" where oversight is tighter.

- The "Everything App" Risk: CEO Brian Armstrong has doubled down on a 2026 roadmap to turn Coinbase into a global "everything exchange" for stocks, crypto, and payments. However, critics argue that expanding the feature set increases the attack surface before the underlying support vulnerabilities are fully resolved.

- Persistent Insider Threats: As recently as early 2026, smaller-scale breaches involving rogue contractors continue to surface. They show that as long as humans have access to data, they remain the primary target for bribery and extortion.

A shift in how platforms need to think

The most significant shift hasn't been in how we lock the door, but in how we verify why someone is walking through it. We've seen a move from "Identity-Centric" to "Outcome-Centric" security.

1. Zero Trust 2.0: Beyond Access

By 2026, the "Never Trust, Always Verify" mantra has evolved. It no longer just applies to the user, but to the intent of the action.

- Dynamic Risk Decisions: Static access policies are being replaced by adaptive engines that evaluate device health, sign-in behaviour, and historical transaction patterns in real-time.

- Micro-segmentation of Intent: New frameworks isolate data stores into zones where only specific "service calls" are authorised, meaning even a compromised support agent cannot pull a full database of 70,000 users.
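As a rough illustration of the two ideas above, the sketch below gates a support agent's record lookups: each lookup must be tied to an open ticket for that customer, and an agent can touch only a limited number of distinct customers per time window. The names (`SupportAccessGate`, `max_customers`, `window_seconds`) and the thresholds are hypothetical, not drawn from Coinbase or any specific framework.

```python
from collections import defaultdict, deque


class SupportAccessGate:
    """Hypothetical gate: ticket-scoped lookups with a per-agent cap."""

    def __init__(self, max_customers=20, window_seconds=3600):
        self.max_customers = max_customers
        self.window_seconds = window_seconds
        # agent_id -> deque of (timestamp, customer_id) for granted lookups
        self._history = defaultdict(deque)

    def allow_lookup(self, agent_id, customer_id, open_tickets, now):
        """Return True only if the lookup is ticket-scoped and under the cap.

        open_tickets: set of customer ids with an open ticket assigned
        to this agent (assumed to come from the ticketing system).
        now: current time in seconds.
        """
        if customer_id not in open_tickets:
            return False  # no business reason to see this record

        history = self._history[agent_id]
        # Drop lookups that have aged out of the window.
        while history and now - history[0][0] >= self.window_seconds:
            history.popleft()

        seen = {cid for _, cid in history}
        if customer_id not in seen and len(seen) >= self.max_customers:
            return False  # bulk-access pattern: refuse and escalate

        history.append((now, customer_id))
        return True
```

The design choice here is that a bribed agent with valid credentials still cannot enumerate customers: access is bounded by open tickets and by volume, not by identity alone.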

2. Intervention at the "Transfer Stage"

Industry practice is now pivoting toward intervention at the stage of irreversibility. Rather than generic pop-up warnings that users click past through sheer warning fatigue, platforms are exploring targeted risk warnings triggered by behavioural signals – such as signs that a user is being coached through a transaction mid-interaction.
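A minimal sketch of what such a targeted warning could look like, assuming the platform can observe a few behavioural signals at the moment of transfer. The signal names (`on_phone_call`, `screen_sharing`, `new_payee`) and the warning texts are illustrative assumptions, not an industry standard.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TransferContext:
    # Hypothetical signals; a real platform would choose its own.
    on_phone_call: bool = False   # a call is active during the session
    screen_sharing: bool = False  # remote-access or screen-share detected
    new_payee: bool = False       # destination never used by this account


def pick_warning(ctx: TransferContext) -> Optional[str]:
    """Return a specific warning for the riskiest matching pattern, or None."""
    if ctx.new_payee and (ctx.on_phone_call or ctx.screen_sharing):
        return ("Is someone guiding you through this transfer? "
                "We will never ask you to move funds to a 'safe' wallet.")
    if ctx.new_payee:
        return "This is a new destination. Check the address before sending."
    return None  # no warning: avoid training users to click past pop-ups
```

Returning `None` in the common case is deliberate: a warning that appears only when the pattern matches is harder to ignore than one shown on every transfer.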

3. Agentic Payments and the x402 Protocol

Perhaps the most "Coinbase-specific" development is their work on the x402 protocol.

- This is an open payment protocol designed for AI agents to pay each other directly using stablecoins (like USDC).

- While this allows for "Agentic Commerce," it introduces a terrifying new attack surface: autonomous adversary agents capable of running entire hyper-personalised phishing campaigns without human oversight.

4. The Legislative Response

Closer to home, the UK Government has launched a new system-wide approach to combatting the "gig economy" of cybercrime.

- The Online Crime Centre: Launching in Q2 2026, this public-private hub is designed to share data in real-time to eliminate online fraud at scale.

- DORA and NIS2: New regulatory overlays (like the Digital Operational Resilience Act) are forcing financial platforms to document not just their security tools, but their "measurable recovery capability" and third-party concentration risks.

Moving Beyond Binary Security: Our Approach to the "Intent Gap"

Most platforms are preoccupied with a binary question: "Is this user authenticated?" It is a bit like checking if a man has a key to the house without asking why he’s currently carrying your television out towards the front door – polite, but ultimately unhelpful. The hard truth of the Coinbase breach is that the system did not fail in execution; it failed in interpretation. It correctly processed the wrong intent because, from a system perspective, the identity was valid and the processes were followed.

At Mesoform, we operate on the principle that trust can be manufactured, and social engineering is an environmental constant rather than a technical anomaly. We don’t just secure the access; we secure the outcome. This shift in focus changes the design problem from preventing entry to controlling what happens once access is already assumed.

In a similar situation, our methodology involves designing a "fail-safe" layer that sits directly between user action and the movement of funds. This layer operates above existing security systems at the point where decisions become irreversible.

When we build for financial or regulated environments, our framework calls for design rules such as:

- Independent verification: Ensuring that high-risk withdrawals are validated outside the primary session to break the attacker's control.

- Behaviour-aware checks: Assessing whether an action aligns with historical user patterns and expected behaviour – because sudden, high-impact changes rarely happen in a vacuum.

- Secondary approval flows: Implementing flows that operate through separate channels to mitigate the risk of a single-session compromise.

- Controlled friction: Introducing deliberate "speed bumps" at the point of fund movement, particularly for anomalous or high-impact transactions.
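The four rules above can be sketched as a single decision function sitting between a withdrawal request and its execution. This is a minimal illustration under stated assumptions, not Mesoform's implementation: the `Withdrawal` fields, the `Decision` outcomes, and the thresholds (`high_value`, `multiple_of_typical`) are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DELAY = "delay"                # controlled friction: cooling-off hold
    VERIFY_OUT_OF_BAND = "verify"  # confirm via a separate channel


@dataclass
class Withdrawal:
    amount: float
    typical_amount: float   # e.g. median of the user's past withdrawals
    known_destination: bool


def decide(w: Withdrawal, high_value=10_000.0, multiple_of_typical=5.0):
    """Map a withdrawal to an outcome. The session being authenticated
    is assumed and deliberately not treated as sufficient."""
    unusual_size = w.amount > multiple_of_typical * max(w.typical_amount, 1.0)
    high_impact = w.amount >= high_value

    # Independent verification / secondary approval for the riskiest case.
    if high_impact and (unusual_size or not w.known_destination):
        return Decision.VERIFY_OUT_OF_BAND
    # Behaviour-aware check: out of pattern but lower impact, so add a speed bump.
    if unusual_size or not w.known_destination:
        return Decision.DELAY
    return Decision.ALLOW
```

For example, `decide(Withdrawal(amount=50_000, typical_amount=200, known_destination=False))` returns `Decision.VERIFY_OUT_OF_BAND`, while a routine withdrawal to a known destination passes straight through.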

The goal is not to block all risk – that would make the system unusable – but to ensure that even when upstream systems fail, or data is exposed, the financial outcome is still controlled. We design systems that assume exposure is inevitable and build control at the point where it matters most: the movement of value.

Why is this different?

Traditional security models are built around perimeter defence and anomaly detection. The assumption is that once a user is authenticated, their actions can be trusted unless something looks clearly abnormal.

The problem is that modern attacks rarely look abnormal from a system perspective. They look like legitimate behaviour driven by manipulated intent.

What we’ve built instead assumes:

- Data exposure is possible

- Social engineering is inevitable

- Trust can be manufactured

Rather than trying to eliminate those conditions, the design focuses on ensuring they do not translate into financial loss.

This is not about adding more alerts or dashboards. It is about introducing a decision layer at the exact point where intent becomes impact.

Build for what actually gets exploited

At Mesoform, we design systems that assume exposure, manipulation, and social engineering are part of the environment, and build control at the point where it matters most: the movement of funds.

If you want to see how we approach that in practice, visit https://www.mesoform.com/